How Sakana AI Is Replacing Hardcoded Agent Frameworks With RL Orchestration

1. The Maturing Reality of Multi-Agent Systems in 2026

Let us start with an industry observation: current multi-agent AI architectures are increasingly showing limitations in production environments. In the enterprise AI deployments I have analyzed over the last three years, companies have invested heavily in building complex agent frameworks using popular routing libraries. The promise was autonomous productivity. The reality, however, is often a rigid system of static routing nodes that requires constant maintenance whenever user query distributions shift.

Engineering teams have recognized the operational cost of managing these systems. When you deploy a planner agent, an executor agent, and a verifier agent, wiring them together manually creates substantial overhead. This phenomenon, recently highlighted in academic research and characterized by industry analysts as the “swarm tax,” causes API bills to escalate because multiple agents frequently pass massive, redundant context windows to one another. The system can become confused by edge cases, leading developers to spend critical engineering hours debugging control logic that was intended to be autonomous.

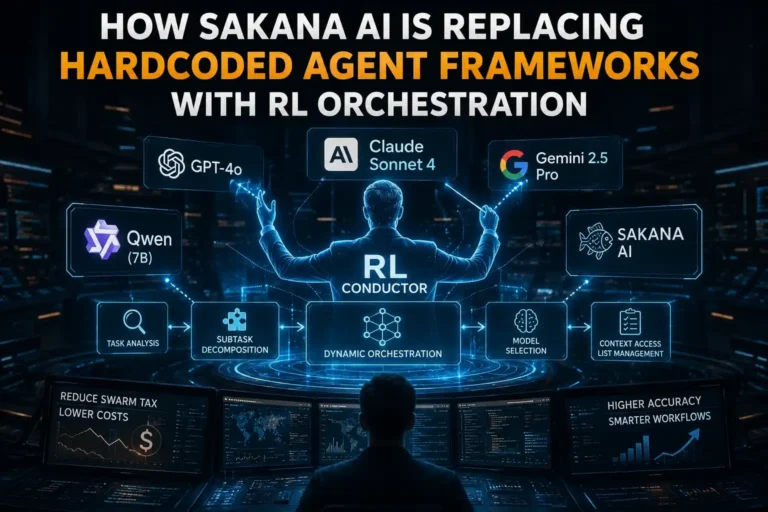

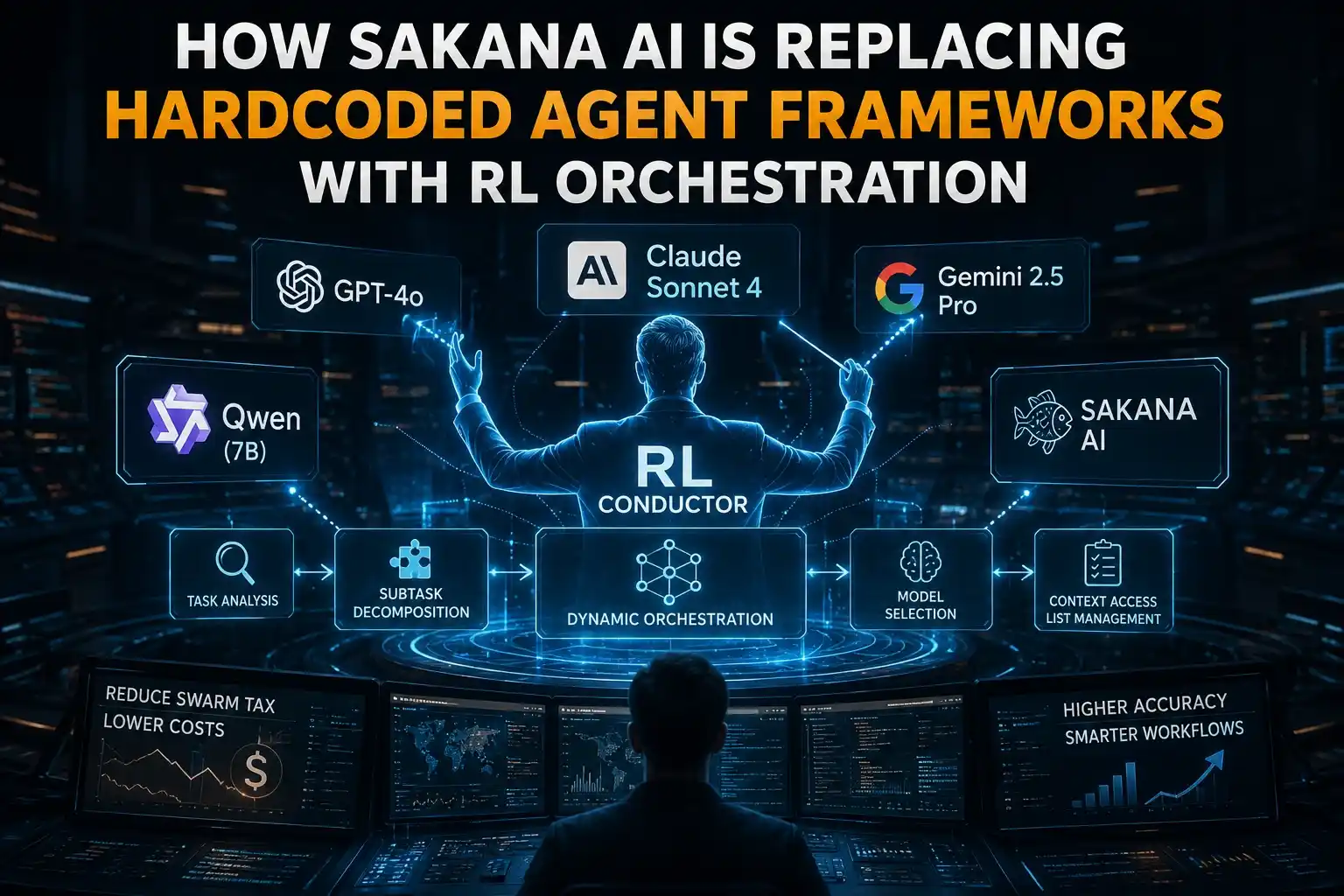

Hardcoded routing of this kind scales poorly to complex workloads. According to early reporting from outlets such as VentureBeat, Sakana AI’s work is one of the most ambitious orchestration experiments currently public. Its researchers have reportedly introduced the RL Conductor, a specialized 7-billion parameter language model trained via reinforcement learning. Its function is to act as a dynamic, natural language orchestrator for major foundation models, including the latest GPT-series models, Claude Sonnet 4, and Gemini 2.5 Pro. It does not simply route queries based on intent classifiers; it organizes workflows autonomously. In this analysis, I will examine the mechanics of Sakana’s RL Conductor, evaluate its commercial counterpart (Sakana Fugu), and provide a strategic assessment of its viability for your enterprise infrastructure.

2. What is Sakana’s 7B RL Conductor?

To accurately assess this system, we must examine its underlying architecture. The RL Conductor is not intended to function as a standalone reasoning engine for end-user queries. It is a highly specialized, 7-billion parameter model—built upon the Qwen architecture—trained specifically for management and delegation. It treats standard API endpoints for frontier models as its operational workforce.

According to Sakana’s published research (reportedly accepted for ICLR 2026), when a complex prompt enters the system, the Conductor analyzes the input and autonomously divides the problem into subtasks. It then assigns each subtask to the most appropriate worker model in its available pool. For example, the system might identify that Claude Sonnet 4 is optimal for initial architectural planning, Gemini 2.5 Pro is best suited for extensive document retrieval, and a frontier OpenAI model is required for final code optimization.

This is a significant departure from traditional semantic routing systems. Older methods typically classify a user’s intent and send the entire prompt to a single, predetermined model. The Conductor instead builds a bespoke communication topology for every individual input. Depending on the complexity of the request, it can spin up a simple sequential chain, a parallel execution tree, or complex recursive loops. It does so without requiring a human-authored script dictating the exact flow of operations.

In simpler terms, the Conductor behaves less like a traditional chatbot and more like an autonomous operations manager for your existing AI infrastructure. For non-engineering teams, the practical implication is lower computational waste and fewer manual routing rules to maintain.

3. The Core Innovation: Natural Language Orchestration

In enterprise pipeline architecture, the primary bottleneck often lies in inter-agent communication. Historically, developers forced large language models to communicate via strict JSON schemas or rigid API contracts. While reliable for traditional software, this heavily restricts the inherent flexibility of natural language models.

Sakana AI’s research suggests that the most efficient method for managing language models is through language itself. The RL Conductor coordinates its pool of models using dynamically generated natural language instructions. For any given step in an enterprise workflow, the 7B model executes three primary operations:

- Adaptive Task Delegation: The Conductor generates a highly specific prompt tailored to the exact subtask required. It effectively functions as an automated prompt engineer, drafting contextual instructions on the fly based on the specific constraints of the problem.

- Dynamic Agent Selection: It evaluates its available pool of models and selects the optimal agent based on the difficulty and requirements of the subtask. For a simple data extraction task, it may route the query to a faster, open-weight 8B model. For a rigorous mathematical proof, it escalates the task to a heavy-reasoning model.

- Precision Context Management (Access Lists): This feature directly addresses the computational bloat of multi-agent systems. Instead of injecting the entire conversation history into every agent’s context window, the Conductor defines an “access list.” It actively curates which past subtasks and prior responses are relevant to the current agent’s assignment. This method of managing context is far more efficient than brute-force processing. For a deeper understanding of memory retention strategies, you can explore recent analyses on managing context windows and instant memory retrieval.
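The three operations above can be pictured as a single record per workflow step: an instruction, a chosen agent, and an access list. The following is a minimal sketch under stated assumptions, not Sakana's implementation. The `Step` class, its field names, the `build_context` helper, and the agent name are all hypothetical and exist only to illustrate the idea.

```python
from dataclasses import dataclass, field


@dataclass
class Step:
    """One orchestration step. All field names are hypothetical."""
    agent: str        # which worker model receives the subtask
    instruction: str  # natural-language prompt written for this subtask
    access_list: list[int] = field(default_factory=list)  # earlier steps this agent may read


def build_context(step: Step, prior_outputs: list[str]) -> str:
    """Assemble a worker prompt from the outputs named in the access list, then the instruction."""
    visible = [f"[step {i}] {prior_outputs[i]}" for i in step.access_list]
    return "\n".join(visible + [step.instruction])


prior_outputs = [
    "Plan: split the parser into a tokenizer and a tree builder.",
    "Retrieved: 40 pages of unrelated style guidelines.",
]
step = Step(
    agent="worker-b",  # placeholder, not a real model identifier
    instruction="Implement the tokenizer described in the plan.",
    access_list=[0],   # the retrieval dump from step 1 is deliberately excluded
)
prompt = build_context(step, prior_outputs)
```

Here the worker sees the plan from step 0 but never receives the irrelevant retrieval output, which is the pruning behavior the access list is meant to provide.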

4. The Power of Reinforcement Learning over Human Intuition

The core technical question is how a 7B parameter model effectively coordinates models significantly larger than itself. Sakana’s approach moves away from imitation learning based on human datasets, relying instead on end-to-end reward maximization via Reinforcement Learning (RL).

When engineering teams hardcode multi-agent frameworks, they inherently limit the system to human cognitive patterns, which typically follow a linear sequence: plan, execute, review. The Conductor was given no such template. During its training phase, it was provided with complex tasks, a diverse worker pool, and a definitive reward signal tied to the accuracy and formatting of the final output.

Through iterative RL training, the model discovered which combinations of instructions and agent pairings yielded the highest rewards. It learned that static routing paths are often suboptimal for varied input distributions. By optimizing through reinforcement learning rather than manual human design, the Conductor developed highly adaptive routing strategies. This dynamic flexibility is a primary reason why traditional, human-engineered pipelines are facing increased scrutiny in high-stakes environments.

5. Recursive Test-Time Scaling: A Strategic Failsafe

One of the most notable features detailed in Sakana’s reporting is their implementation of “Recursive Test-Time Scaling.” This concept introduces a critical shift in how compute allocation is managed during model inference.

In traditional setups, an AI generates an answer, the processing concludes, and the output is served to the user. Sakana’s Conductor, however, has the architectural capability to select itself as a worker in the pool. This allows the system to review its own execution pipeline.

Consider a software engineering scenario: The Conductor orchestrates a team to solve a complex backend bug. An executor model writes the patch, and a verifier model runs an analysis. If the verifier flags an issue, the Conductor can read the output, recognize the failure state, and generate a corrective workflow. It allocates more compute to the problem until the established reward criteria are met. By acting as an internal quality assurance loop, test-time scaling may reduce certain classes of reasoning failures compared to single-pass inference.
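The executor and verifier scenario can be approximated with a bounded retry loop. This sketch is a simplification under stated assumptions: the source describes the Conductor selecting itself as a worker, whereas the code below only shows the general pattern of spending more inference rounds until a verifier accepts. `solve_with_retries`, `fake_executor`, `fake_verifier`, and the `max_rounds` budget are hypothetical.

```python
from typing import Callable


def solve_with_retries(
    task: str,
    execute: Callable[[str], str],
    verify: Callable[[str], bool],
    max_rounds: int = 3,
) -> tuple[str, int]:
    """Run execute/verify rounds until the verifier accepts or the budget runs out.

    Returns the last answer and the number of rounds used.
    """
    instruction = task
    answer = ""
    for round_number in range(1, max_rounds + 1):
        answer = execute(instruction)
        if verify(answer):
            return answer, round_number
        # Corrective step: feed the rejected attempt back in as context.
        instruction = f"{task}\nPrevious attempt was rejected: {answer}\nFix it."
    return answer, max_rounds


# Stub workers standing in for real model calls (assumptions for illustration).
def fake_executor(instruction: str) -> str:
    return "patch v2" if "rejected" in instruction else "patch v1"


def fake_verifier(answer: str) -> bool:
    return answer.endswith("v2")


result, rounds = solve_with_retries("Fix the backend bug.", fake_executor, fake_verifier)
```

The explicit `max_rounds` cap is a design choice in this sketch: without it, a verifier that never accepts would consume compute indefinitely.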

6. Performance Data: Benchmarks That Matter

Enterprise adoption requires verifiable data. Preliminary benchmark results indicate improvements in complex problem-solving environments when using this orchestration method.

By coordinating a mix of proprietary and open-weight models, the 7B Conductor reportedly outscored a Mixture-of-Agents baseline on several rigorous benchmarks, by roughly four to five percentage points on each row below.

| Benchmark Evaluation | Legacy Mixture-of-Agents (MoA) Baseline | Sakana 7B RL Conductor |
|---|---|---|
| AIME 2025 (Advanced Mathematics) | 88.2% | 93.3% |
| GPQA-Diamond (Graduate-Level Science) | 83.5% | 87.5% |
| LiveCodeBench (Real-world Software Engineering) | 79.8% | 83.9% |
| Overall Task Average | 73.1% | 77.27% |

According to Sakana’s published materials, the Conductor frequently outperformed individual frontier models by selectively combining their specialized strengths. Additionally, it proved more efficient than standard Mixture-of-Agents (MoA) frameworks. While MoA relies on redundant processing by forcing multiple large models to generate sequential responses, the Conductor applies targeted compute precisely where it is required.
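To make the size of the reported gap concrete, the short calculation below computes the percentage-point difference for each row of the table. The scores are copied from the table as reported; the variable names are my own.

```python
# (baseline %, conductor %) pairs copied from the table above
scores = {
    "AIME 2025": (88.2, 93.3),
    "GPQA-Diamond": (83.5, 87.5),
    "LiveCodeBench": (79.8, 83.9),
    "Overall Task Average": (73.1, 77.27),
}

# Percentage-point gain of the Conductor over the MoA baseline, rounded for display
gains = {
    name: round(conductor - baseline, 2)
    for name, (baseline, conductor) in scores.items()
}

for name, gain in gains.items():
    print(f"{name}: +{gain} points")
```

The gains come out at 5.1, 4.0, 4.1, and 4.17 points respectively. Note that the overall row is lower than any of the three listed benchmarks, so it presumably averages over additional tasks that the table does not show.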

7. Cost vs. ROI Analysis: Mitigating the “Swarm Tax”

For infrastructure leads and financial decision-makers, benchmark performance must be weighed against unit economics. A primary vulnerability of agentic deployments is unpredictable cost scaling. Unoptimized agents can get caught in recursive loops, dragging extensive token context windows through multiple API calls.

Sakana’s orchestration method directly addresses this efficiency gap. Because the Conductor actively manages the “access list” for each agent, it aggressively prunes the data sent in each API request. It ensures that a model debugging a specific function only evaluates the relevant code snippet, rather than the entire conversational history.

The system also dynamically scales model usage based on inherent task difficulty. According to early analysis, it routinely assigns simpler, factual queries to cost-effective open-weight models, reserving premium frontier models exclusively for complex reasoning tasks. For a broader understanding of how these efficient models are impacting the enterprise ecosystem, it is worth analyzing how open-weight models stack up against US AI titans. The resulting ROI implication is clear: enterprises can achieve high-tier accuracy across their application surface while maintaining a significantly lower blended average token rate.
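The effect of access lists on context cost can be illustrated with simple arithmetic. This is a sketch with made-up numbers, not measured data: the token counts, the access lists, and both function names are hypothetical. It compares a pipeline where every step receives all earlier outputs against one where each step receives only what its access list names.

```python
def tokens_sent_full_history(step_output_tokens: list[int]) -> int:
    """Context tokens sent when each step receives every earlier output."""
    return sum(
        sum(step_output_tokens[:i]) for i in range(len(step_output_tokens))
    )


def tokens_sent_access_list(
    step_output_tokens: list[int], access_lists: list[list[int]]
) -> int:
    """Context tokens sent when each step receives only the outputs in its access list."""
    return sum(step_output_tokens[j] for allowed in access_lists for j in allowed)


outputs = [2000, 3000, 1500, 2500]  # hypothetical tokens produced by steps 0..3
access = [[], [0], [1], [0, 2]]     # hypothetical: which earlier steps each step may read

full = tokens_sent_full_history(outputs)
pruned = tokens_sent_access_list(outputs, access)
savings = 1 - pruned / full
```

With these illustrative numbers, the full-history pipeline sends 13,500 context tokens and the pruned one sends 8,500, a reduction of about 37%. Real savings depend entirely on how selective the access lists are for a given workload.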

8. Evaluating Sakana Fugu: The Commercial Reality

While academic papers outline the underlying research, Sakana AI has reportedly productized this orchestration technology via a commercial service called Sakana Fugu. For teams spending disproportionate engineering hours maintaining brittle routing logic, this type of managed service warrants evaluation.

Currently noted to be in early beta access, Fugu operates as an API endpoint designed to interface with existing infrastructure. Instead of managing disparate API keys for Anthropic, Google, and OpenAI, and maintaining the associated routing logic, developers route prompts to a single endpoint. The orchestration and model selection occur autonomously on the backend.

According to preliminary external reporting, Fugu is being evaluated in two primary architectural variants to suit different enterprise workloads:

- Fugu Mini: Optimized for lower latency applications. It relies heavily on a pool of highly efficient, hyper-fast open-weight models, escalating to mid-tier proprietary models only when required. This configuration is suitable for rapid data extraction, high-volume customer support routing, and processes where strict latency constraints are enforced.

- Fugu Ultra: The comprehensive orchestrator designed for maximum reasoning capability. It manages the full spectrum of models, including the latest iterations of GPT-series models and Gemini. Fugu Ultra is engineered for asynchronous, deeply complex workloads such as automated codebase refactoring, complex financial analysis, and rigorous scientific research. The latency is naturally higher, but the cognitive output ceiling is maximized.

9. Systemic Limitations: Where Fugu Requires Oversight

As an analytical product evaluation, it is necessary to highlight the systemic risks introduced by deploying an autonomous orchestrator. Shifting from hardcoded logic to RL-driven management requires distinct mitigation strategies.

1. Latency Accumulation in Synchronous UX: Even with optimized API calls, recursive workflows inherently increase processing time. If the Conductor determines a query requires a multi-step pipeline incorporating test-time scaling, the end-user may experience response delays of 15 to 30 seconds. This is generally unacceptable for synchronous, real-time chat interfaces. Applications utilizing high-tier orchestration must be architected for asynchronous task completion.

2. Abstraction and Loss of Granular Control: By utilizing managed orchestration APIs, an enterprise introduces a significant layer of abstraction. You do not control the exact routing logic. If the system autonomously routes a specific proprietary workload to a model that historically underperforms on your unique internal data syntax, you have limited levers to manually override the selection process. You are trading precise deterministic control for generalized autonomous efficiency.

3. Compounded Infrastructure Dependencies: The orchestration system is entirely dependent on the uptime and stability of the underlying worker models. While a dynamic orchestrator can reroute around a specific provider outage, subtle changes to an underlying model’s syntax or API structure could temporarily disrupt the workflow topology before the system adapts.

Why Some Enterprises May Resist Autonomous Orchestration

Despite the operational efficiency, it is crucial to recognize why highly regulated sectors might resist this shift. In industries like finance or healthcare, auditability and deterministic workflows are paramount. When an RL model autonomously decides how to route a query, tracking the exact decision matrix for compliance purposes becomes challenging. For organizations requiring strict observability to meet legal mandates, the “black box” nature of dynamic routing introduces operational risks that manual, hardcoded frameworks—despite their inefficiencies—currently avoid.

10. Implementation Strategy: A Roadmap for CTOs

If organizational data indicates that migrating toward natural language orchestration is a strategic necessity, implementation must be methodical. Ripping out existing infrastructure abruptly introduces unnecessary risk. I recommend the following phased integration plan.

Step 1: Conduct an API Efficiency Audit

Establish a strict baseline before integrating new orchestration tools. Measure the exact token expenditure per query in your current pipelines. Identify the top 20% of workflows that consume the vast majority of your compute budget. These highly inefficient pipelines should be the initial targets for optimization.
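A baseline audit of this kind can start from a simple ranking of workflows by token spend. The sketch below assumes you can already export total tokens per workflow from your logs; the workflow names, the token figures, and the `top_spenders` helper are hypothetical.

```python
def top_spenders(
    tokens_by_workflow: dict[str, int], fraction: float = 0.2
) -> tuple[list[str], float]:
    """Return the top `fraction` of workflows by token spend and their share of total spend."""
    ranked = sorted(tokens_by_workflow, key=tokens_by_workflow.get, reverse=True)
    count = max(1, round(len(ranked) * fraction))  # always inspect at least one workflow
    top = ranked[:count]
    total = sum(tokens_by_workflow.values())
    share = sum(tokens_by_workflow[name] for name in top) / total
    return top, share


# Hypothetical monthly token totals per workflow
usage = {
    "contract-review": 6_000_000,
    "support-triage": 1_200_000,
    "code-refactor": 1_800_000,
    "faq-lookup": 400_000,
    "report-summary": 600_000,
}

top, share = top_spenders(usage)
print(f"Top workflows: {top}, share of spend: {share:.0%}")
```

In this invented example, one workflow out of five accounts for 60% of token spend, which would make it the obvious first candidate for optimization.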

Step 2: Document Workflow Failure States

Catalog the specific input distributions that currently cause your automated agents to fail. Document instances where verifier agents miss edge cases or where planner agents enter infinite loops. This failure data is crucial for measuring the baseline accuracy that any new orchestration system must surpass.

Step 3: Deploy Tiered Routing in a Sandbox Environment

Before moving to full autonomous orchestration, build internal muscle memory by implementing basic tiered routing. Use a lightweight classifier to categorize incoming queries by complexity. Route simple data processing to efficient open-weight models and reserve premium models strictly for complex analysis. This prepares your infrastructure for mixed-model environments.
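A first version of tiered routing can be very small. The sketch below uses a crude keyword and length heuristic in place of a trained classifier, which is an assumption made to keep the example self-contained. The hint list, the word threshold, and the tier names are placeholders rather than recommendations.

```python
COMPLEX_HINTS = ("prove", "refactor", "analyze", "debug")  # hypothetical keyword list
MAX_SIMPLE_WORDS = 50                                       # hypothetical length threshold

# Placeholder tier names; substitute the models your organization actually uses.
TIERS = {"simple": "open-weight-small", "complex": "premium-frontier"}


def classify(query: str) -> str:
    """Crude complexity heuristic standing in for a trained lightweight classifier."""
    text = query.lower()
    is_long = len(text.split()) > MAX_SIMPLE_WORDS
    has_hint = any(hint in text for hint in COMPLEX_HINTS)
    return "complex" if is_long or has_hint else "simple"


def route(query: str) -> str:
    """Map a query to the model tier that should handle it."""
    return TIERS[classify(query)]


simple_target = route("Extract the invoice date from this email.")
complex_target = route("Refactor the billing module to remove the circular import.")
```

Logging the output of `classify` alongside downstream success rates gives you the data needed to replace the heuristic with a learned classifier later.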

Step 4: Execute a Shadow Deployment

When gaining access to advanced orchestration endpoints, initiate a shadow deployment. Mirror a subset of your production traffic to the new API and log its responses and latency metrics alongside your legacy system. Compare execution accuracy and blended API costs. Only transition live traffic once the orchestrator demonstrates a verifiable, consistent reduction in cost-per-successful-query.
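The go/no-go metric named above, cost per successful query, is straightforward to compute from shadow logs. This sketch assumes each mirrored request records a cost and a pass/fail outcome for both systems; the `ShadowRecord` fields and the sample values are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class ShadowRecord:
    """One mirrored request. Field names are hypothetical."""
    legacy_cost: float     # dollars spent by the existing pipeline
    legacy_ok: bool        # did the existing pipeline produce an acceptable answer?
    candidate_cost: float  # dollars spent by the orchestrator under evaluation
    candidate_ok: bool     # did the orchestrator produce an acceptable answer?


def cost_per_success(costs: list[float], successes: list[bool]) -> float:
    """Total spend divided by the number of successful queries."""
    wins = sum(successes)
    return float("inf") if wins == 0 else sum(costs) / wins


records = [
    ShadowRecord(0.10, True, 0.06, True),
    ShadowRecord(0.12, False, 0.08, True),
    ShadowRecord(0.10, True, 0.05, False),
    ShadowRecord(0.08, True, 0.07, True),
]

legacy = cost_per_success(
    [r.legacy_cost for r in records], [r.legacy_ok for r in records]
)
candidate = cost_per_success(
    [r.candidate_cost for r in records], [r.candidate_ok for r in records]
)
should_consider_cutover = candidate < legacy
```

Failed queries still count toward total cost, so a cheaper system that fails more often can end up with a worse cost per success. A handful of records like this is only illustrative; a real comparison needs enough traffic for the difference to be consistent over time.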

11. The Verdict: Build, Buy, or Monitor?

To conclude this analysis: the viability of manual, graph-based agent orchestration is narrowing. Forcing human-designed sequence logic onto advanced neural networks often creates unnecessary friction and fragility in scaling environments. The published research surrounding RL orchestration suggests that using natural language as a connective management layer provides a more resilient alternative.

Should an enterprise build its own RL Conductor? Only if the organization possesses an elite AI research division and highly specialized, proprietary data that cannot be processed via commercial endpoints. Training a capable orchestration model via RL requires meticulous reward shaping and substantial computational resources.

Should enterprises ignore this development? No. As autonomous orchestration systems mature, they offer a clear pathway to higher quality software execution and data analysis at a reduced blended token cost. The integration of test-time scaling provides a layer of quality assurance that static single-pass models cannot replicate.

Should you adopt systems like Sakana Fugu? If your engineering team is struggling with the brittleness and escalating costs of current agentic frameworks, monitoring these public betas is a sound strategy. A managed orchestrator of this kind offers a streamlined way to access the collective capabilities of the industry’s best models without the heavy management overhead. The larger strategic implication is that intelligent orchestration may become significantly more important than raw model size over the next phase of enterprise AI competition.

12. Frequently Asked Questions (FAQ)

What is the Sakana RL Conductor?

The RL Conductor is a 7-billion parameter language model reportedly developed by Sakana AI. Instead of generating final answers for users, it is trained using reinforcement learning to autonomously orchestrate, manage, and delegate tasks to a pool of larger foundation models like the GPT-series and Claude.

How does AI orchestration differ from semantic routing?

Traditional semantic routing classifies a user’s intent and sends the entire prompt to a single corresponding model. AI orchestration dynamically breaks a complex prompt into subtasks, assigns each part to different specialized models based on their strengths, and manages the communication between them to synthesize a final result.

What is test-time scaling in AI inference?

Test-time scaling allows an AI system to allocate more computational resources to a problem during the inference (generation) phase. In an orchestrated system, the primary model can review the output of its worker agents, detect logical failures, and autonomously spin up corrective workflows until the output passes verification, rather than failing after a single attempt.

Why are traditional multi-agent systems considered expensive?

Traditional multi-agent systems often suffer from the “swarm tax.” Because they are manually wired together, they frequently pass massive, redundant context windows back and forth between multiple agents for every single step of a task. Each additional step resends much of what came before, so API token costs grow far faster than the number of steps.

Is Sakana Fugu available for public enterprise use?

According to early industry reporting, Sakana AI opened applications for beta access to Sakana Fugu in 2026. It is positioned as an API endpoint intended to replace manual API key management and hardcoded routing frameworks for enterprise developers.